
Celebrating IU's supercomputing evolution

The systems we deliver and the software we support have changed drastically since 1955, and they continue to change as new discoveries in information technology occur. What has not changed is our basic philosophy of service, along with the commitment of University Information Technology Services and the IU Pervasive Technology Institute to supporting innovation and creativity in the IU research and academic community. As of today, people who use the Big Red II supercomputer represent more than 150 disciplines of scholarly and artistic endeavor practiced at Indiana University. This is quite a milestone!

Read more from PTI Executive Director Craig Stewart

Supercomputing for Everyone Series: Research Services Expo and Peebles Memorial Lectures in Information Technology

Learn about IU resources that can help you advance your research. From supercomputers and big data storage to grant services and library resources, we've got what you need.

April 16 in the Herman B Wells Library

9:00-10:30am Peebles Memorial Lecture featuring Dr. Felix Bachmann, Carnegie Mellon University: "Is Software Engineering Obsolete?"

10:30am-2:00pm Research Services Expo

We'll be celebrating Big Red II reaching its 150-discipline goal by giving away Supercomputing for Everyone superhero t-shirts to the first 100 attendees who visit four of the 20 stations at the expo.

Click here for more information about the lecture series and the Research Services Expo

About Big Red II

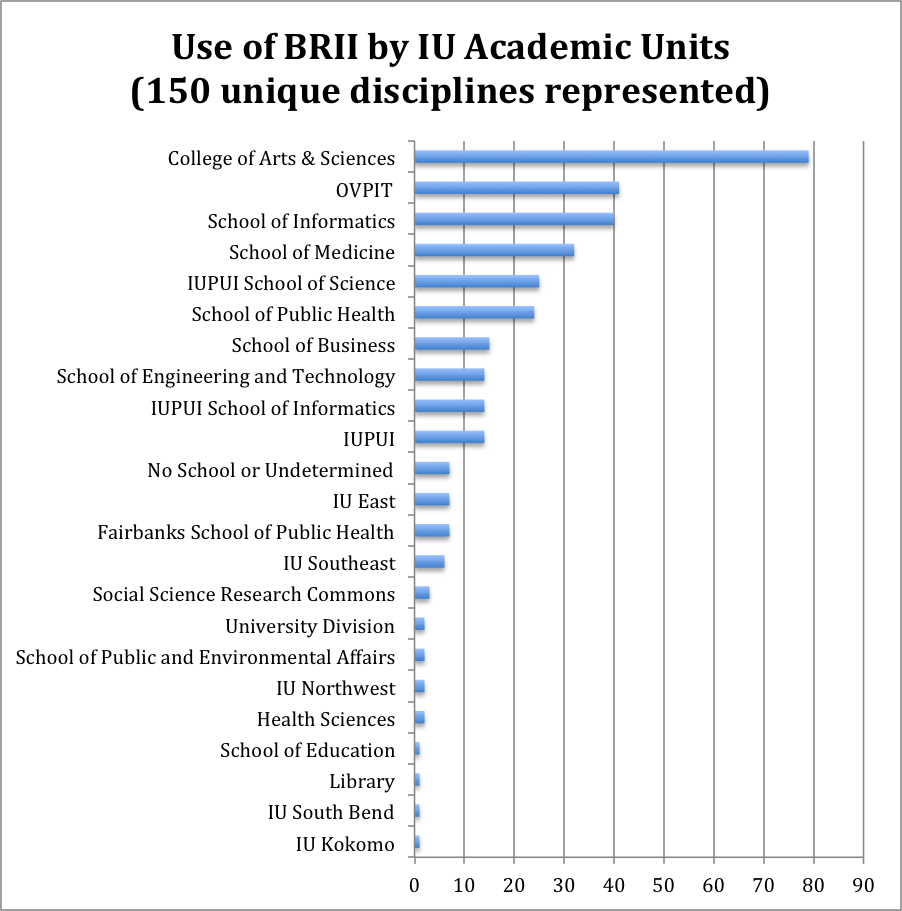

IU's Big Red II supercomputer (BRII), launched two years ago in April 2013, was the nation's first university-owned, one-petaFLOPS system. IU purchased the system for the exclusive use of researchers on all university campuses. At launch we set a goal of reaching users in 150 disciplines within three years, with the intent of enabling research in areas outside the traditional hard sciences. That milestone was met in January 2015, well ahead of schedule. The chart below shows the pervasive use of the system by arts and humanities disciplines, business, public health, and informatics. And the list continues to grow.

Use of Big Red II by IU academic units, August 2013 to December 2014. *Many disciplines report across multiple IU academic units.

Big Red II has also served as a springboard to the use of major national systems. For example, Steve Gottlieb used large amounts of time on Big Red II's GPU nodes to optimize and tune Intel Many Integrated Core (MIC) Architecture code to run on Nvidia GPUs. Gottlieb also drew on this work to successfully request time on the NSF Blue Waters system and on Titan, the DOE Cray supercomputer at Oak Ridge National Laboratory. Gottlieb and the MILC consortium hold an allocation of more than 30 million node hours on Blue Waters, the single largest award on that system (the second-largest allocation is 18 million node hours). Based on IU internal core-hour rates, the value of this award is roughly $3M, and IU's core-hour charge is lower than the cost of Blue Waters core hours. A large request for time on Titan is also under review through the DOE Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program.

Following are selected highlights of research that involved Big Red II. Beyond scientific research, Big Red II aids economic development in the State of Indiana: businesses can use otherwise idle cycles during periods of low demand to advance their own research. (See the highlight on Cummins Inc., Columbus, IN, below.) In addition, Big Red II users brought in more than $64.4M in federal funding in fiscal year 2014. Click here to see the chart showing amounts and areas of funding.

Another important asset of this research is the data it generates. Data that can be stored, retrieved, and used by other researchers is invaluable in advancing knowledge and serves as a building block for future research. BRII offers storage on Data Capacitor II, the Scholarly Data Archive, and the Research File System. Together these cover a wide array of needs: archival storage, real-time storage for collaboration, and temporary high-speed storage for moving large data sets across the network.

Finding the sources of Alzheimer's disease

Nearly 44 million people worldwide may be living with Alzheimer's disease or other dementias, according to the BrightFocus Foundation. Since 2005, the Alzheimer's Disease Neuroimaging Initiative (ADNI) has been studying genetic and environmental causes of Alzheimer's. A study by Dr. Andrew Saykin, Raymond C. Beeler Professor of Radiology and director of the IU Center for Neuroimaging, involves the entire genomes of 818 volunteers. Saykin first needed to assemble the raw genetic data from each volunteer into a full, properly aligned genome. On a standard scientific workstation this would take about two weeks per genome, or roughly 400 months (more than 30 years) for the whole study. Using Big Red II, Saykin assembled these genomes in roughly eight months, using up to one petabyte of data storage. "Data sets of unprecedented scope can facilitate new discoveries regarding the brain, genome, disease and therapies but computational power has become a major bottleneck to scientific progress," said Saykin. Saykin can now compare the genetic sequences of healthy individuals and people with Alzheimer's disease, then use brain scans and behavioral data to track the disease's progress.

Pasta and waffles and neutron stars?

The structure of a neutron star's interior provides clues that could help develop stronger materials (much as burrs inspired Velcro) or make an impact on the energy industry. A neutron star is the remnant of a massive star that has ended its life in a core-collapse (or Type II) supernova. What's left? A compact, super-dense ball of nuclear matter with more mass than the sun, packed into a sphere with a radius of just 10 kilometers. The inner crust of a neutron star contains a layer dubbed "nuclear pasta," which, compressed to 10¹⁴ grams per cubic centimeter (100 trillion times denser than water), forms waffle-like layers 10 billion times stronger than steel. The detailed form of matter at this depth also determines many of the overall properties of a neutron star. By running large-scale molecular dynamics simulations on Big Red II, IU Physics Professor Chuck Horowitz and colleagues can follow the interactions of individual neutrons and protons in this layer. With further study of the star's thermal conductivity and interior structure, physicists envision pursuing new material and energy applications.

Big Red II's GPUs help Cummins improve diesel engines

Diesel engines are a major source of greenhouse gases and nitrogen-bearing compounds. Cummins Inc. (Columbus, IN), which sold 1 million diesel engines in 2014, is committed to increasing the engines' fuel efficiency while decreasing pollutants. For a single 18-wheeler, a 5% increase in fuel efficiency cuts CO2 emissions by 14 tons per year and saves $4,000 a year in fuel costs.

Diesel fuel combustion involves hundreds of different chemicals and thousands of chemical reactions. Cummins engineers are using sophisticated computer simulations to help improve the design of the engines' pistons and combustion chambers, and handling such detailed reaction mechanisms requires GPU-based computing. A partnership of Lawrence Livermore National Laboratory, Indiana University, and Cummins is adapting software from Convergent Science to run on GPUs.
